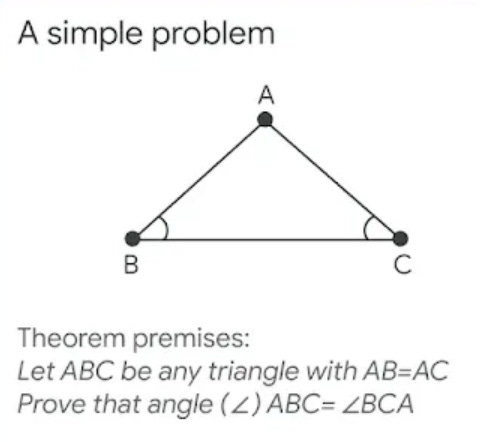

In a blog post released today, Google DeepMind describes their new system, AlphaGeometry, an AI model that does only one thing quite well. For most (including myself), geometry proofs are a painful memory from high school. Even having gone on to take many more math classes, geometry always felt like a side quest that I skipped, never really learning it. I don’t even know where to begin with the most simple problem they use as an example:

I gave the above image to ChatGPT 4 and asked it to generate a proof. It was able to interpret the image quite well (which I still find to be a bit mind blowing), but the proof it gave felt lacking:

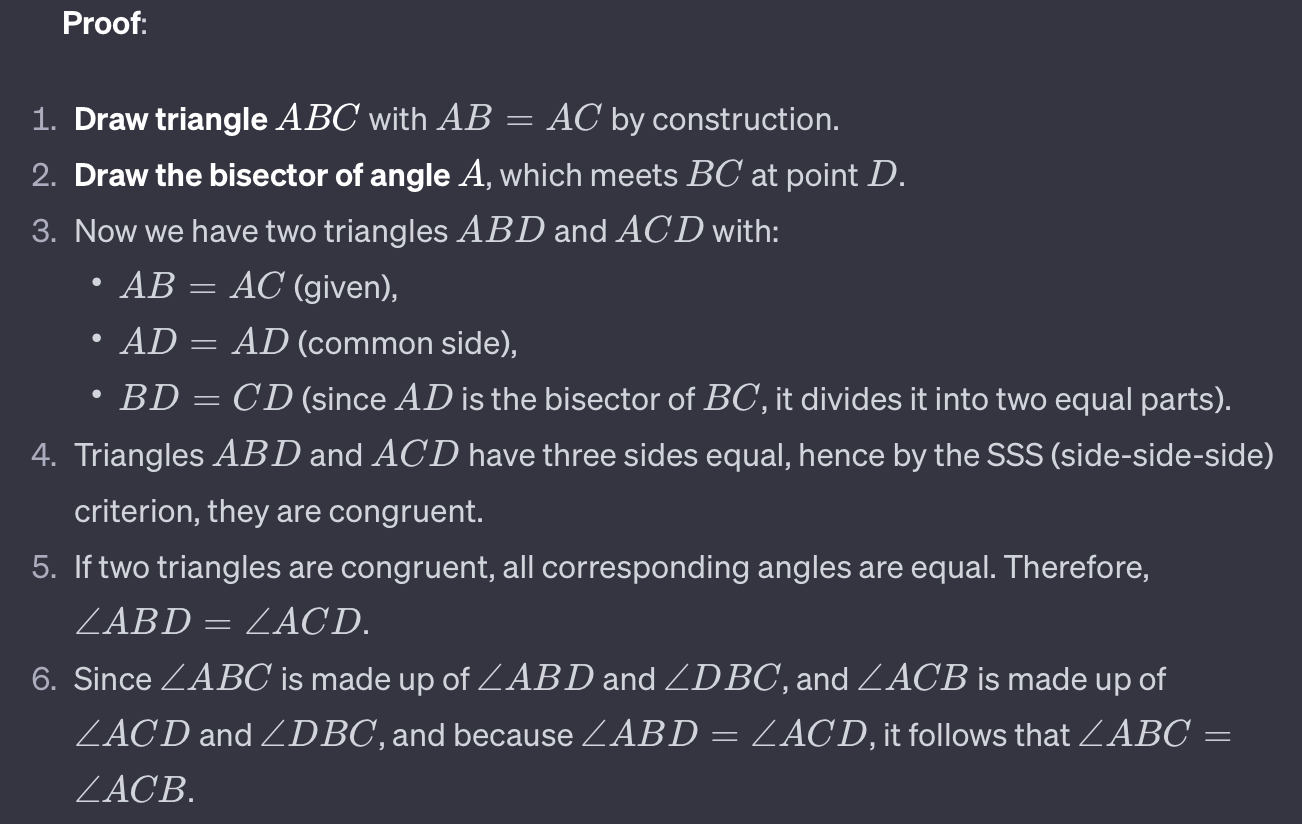

While correct, using the properties of an isosceles triangle as a given seems like cheating. I followed up by asking it to not use isosceles triangle properties as a given and it responded with a more detailed proof:

The ability of a generic LLM to reason through this domain specific problem is impressive, but there are some downsides. Prompting the model to do so was not trivial and took multiple steps, even for this very simple example. Also the generated proof is not easily verifiable (and LLMs are known to hallucinate). A human with sufficient knowledge of geometry might read it and be satisfied, but it would be difficult for a computer to do so. In contrast, the proof generated by AlphaGeometry appears a bit more machine readable:

This image from the blog post exists to be illustrative to humans, but you can begin to see what looks like a more structured, symbolic representation that a computer could reasonably parse and generate. Let’s take a bit of a jump and look at how another simple example problem is actually expressed and input into the model:

orthocenter

a b c = triangle a b c; d = on_tline d b a c, on_tline d c a b ? perp a d b cThis problem, titled “orthocenter”, specifies some existing conditions (everything before the question mark), and a goal to prove (what’s after the question mark). Fully understanding what this problem is specifying requires diving into the code on GitHub at google-deepmind/alphageometry, but you can probably start to understand a bit of it, and we start to get a glimpse of how this model works under the hood.

AlphaGeometry is a neuro-symbolic system made up of a neural language model and a symbolic deduction engine, which work together to find proofs for complex geometry theorems.

One half of AlphaGeometry is a language model, like ChatGPT. The other half is a “symbolic deduction engine”, which might sound fancy but can probably be considered a much simpler technology. It has the capability to take a state defined by the problem, basic axioms of geometry provided to it, and to search the resulting graph for a solution that arrives at the desired end goal with the path along the way being the step by step proof. The default set of axioms or “rules” AlphaGeometry uses are defined here. One example looks like this:

perp A B C D, perp C D E F, ncoll A B E => para A B E FThis says that if you know the following three conditions:

Lines A-B, and C-D which are perpendicular to each other

Lines C-D and E-F which are perpendicular to each other

The points A, B, and E are not collinear (not on the same line)

Then you can deduce that the lines A-B and E-F are parallel. If a given problem satisfies the first three conditions, then the deduction engine can use this rule to now know a fourth condition, which combined with additional rules might eventually lead to a condition which satisfies the desired proof. Many times however, there will simply be no path to a solution from the conditions of a given problem, with the rules provided. This is where the language model comes in.

Let’s go back to the solution to the original example:

Anywhere you see a ⟹ symbol is a “rule” that was provided to the deduction engine. Given the conditions on the left, which are known to be true, the condition on the right is also implied to be true. Note that the path to a solution was only possible with the construction of a new point D, defined in some specific way. This provided the conditions that are highlighted in blue on the second and third lines and allowed for the deduction engine to find the path of a proof which did not exist without it. The challenge lies in the fact that there are infinite constructions which might be tried, most of them not helpful. Instead of arbitrarily creating a new point D that was a midpoint of BC, it might have been a midpoint of AB, which would not have led to a proof found by the deduction engine.

Deciding which constructions to try is what the language model is responsible for. Instead of trying a bunch of random ideas from the infinite set of possibilities, a language model is constructed with the goal of providing actually helpful ideas based on the text of the conditions that are already known. It is the combination of the language model’s creativity, and the deduction engine’s grounded reasoning which ultimately find a proof.

It is this combination of systems that are novel in the AlphaGeometry system. Language models have shown to have incredible capability to provide creative and useful outputs, but they are also known to hallucinate, that is to have outputs which are factually incorrect. In contrast, the deduction engine is a system which if programmed without errors, will always be correct. If a solution exists given the known information and allowed rules, it can exhaustively search for it, and will result in a valid proof. The deduction system is limited in the ways in which it can search though, it will not construct new conditions that expand the graph into having a solution. The two work in harmony here to provide an end result that is much more capable than a deduction engine alone, while still retaining its grounded correctness.

Also of interest in the development of this system is the way in which training data for the language model was generated automatically without needing humans to ideate a bunch of geometry problems and corresponding proofs as input. I recommend reading the original blog post for details of that process.

For more details of the AlphaGeometry system, you can look at their paper published in Nature. Also of interest is another article from last month by DeepMind in which they describe additional cases of using LLM’s to generate creative discoveries in mathematics by grounding them with objective evaluations.